16.5 Entrance Corner

Addition Theorems

Mutually Exclusive Events

Two or more events associated to a random experiment are mutually exclusive if the occurrence of one of them prevents or denies the occurrence of all the others.

It follows from the above definition that two or more events associated to a random experiment are mutually exclusive, if there is no elementary event which is favourable to all the events.

Thus, if two events \(A\) and \(B\) are mutually exclusive, then

\(

P(A \cap B)=0

\)

Similarly, if \(A, B\) and \(C\) are mutually exclusive events, then \(P(A \cap B \cap C)=0\).

Clearly, all elementary events associated to a random experiment are mutually exclusive as no two or more of them can occur together.

In a single throw of a pair of dice, let us consider the following events:

\(A=\) Getting 8 as the sum.

\(B=\) Getting an even number on first die.

and, \(\quad C=\) Getting 10 as the sum.

Clearly, \(A\) and \(C\) are mutually exclusive but \(A\) and \(B\) are not mutually exclusive. Also, \(B\) and \(C\) are not mutually exclusive. However, \(A, B\) and \(C\) taken all the three together are mutually exclusive.

Note: The events which are not mutually exclusive are known as compatible events.
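
The dice example above can be checked by listing the sample space. The following is a minimal sketch, assuming the definition stated earlier (events are mutually exclusive when no elementary event is favourable to all of them); the helper name `mutually_exclusive` is introduced here only for illustration.

```python
from itertools import product

# Sample space for one throw of a pair of dice: 36 ordered pairs.
S = set(product(range(1, 7), repeat=2))

A = {s for s in S if sum(s) == 8}      # getting 8 as the sum
B = {s for s in S if s[0] % 2 == 0}    # even number on the first die
C = {s for s in S if sum(s) == 10}     # getting 10 as the sum

def mutually_exclusive(*events):
    """True if no elementary event is favourable to all the given events."""
    return len(set.intersection(*events)) == 0

print(mutually_exclusive(A, C))     # True
print(mutually_exclusive(A, B))     # False
print(mutually_exclusive(B, C))     # False
print(mutually_exclusive(A, B, C))  # True
```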

Let two cards be drawn from a well-shuffled pack of 52 cards.

Consider the following events:

\(

\begin{aligned}

& A=\text { Two cards drawn are red cards } \\

& B=\text { Two cards drawn are kings. }

\end{aligned}

\)

If in a random draw of two cards, we get two red kings (i.e. kings of diamond and heart), then this elementary event is favourable to both \(A\) and \(B\). So, we can say that the events \(A\) and \(B\) are happening simultaneously. So, \(A\) and \(B\) are not mutually exclusive events. Also,

\(

\begin{aligned}

& P(A \cap B) \\

& =\begin{array}{c}

\text { Probability of getting two cards which are red and kings both} \\

\end{array} \\

& =\text { Probability of getting two red kings }=\frac{{ }^2 C_2}{{ }^{52} C_2} \neq 0

\end{aligned}

\)

Breakdown of the Calculation:

To find \(P(A \cap B)\), we look for the number of outcomes that satisfy both conditions divided by the total possible outcomes.

The Intersection \((A \cap B)\) : For a card to be both “Red” and a “King,” it must be either the King of Hearts or the King of Diamonds. There are exactly 2 such cards in a standard deck.

Favorable Outcomes: Since we are drawing two cards, the number of ways to draw both red kings is \({ }^2 C_2\).

\(

{ }^2 C_2=\frac{2!}{2!(2-2)!}=1

\)

Total Outcomes: The total number of ways to draw any two cards from a deck of 52 is \({ }^{52} C_2\).

\(

{ }^{52} C_2=\frac{52 \times 51}{2 \times 1}=1,326

\)

Placing these into your formula:

\(

P(A \cap B)=\frac{{ }^2 C_2}{{ }^{52} C_2}=\frac{1}{1,326}

\)

Since \(\frac{1}{1,326} \neq 0\), you have mathematically proven that the events are not mutually exclusive.
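
The counting above can be reproduced with a short sketch using only the Python standard library. The variable names are illustrative and not part of the original text.

```python
from fractions import Fraction
from math import comb

favourable = comb(2, 2)   # ways to draw both red kings
total = comb(52, 2)       # ways to draw any two cards from 52
p_a_and_b = Fraction(favourable, total)

print(favourable, total, p_a_and_b)  # 1 1326 1/1326
```

Using `Fraction` keeps the result exact, which makes it easy to see that the probability is small but not zero.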

Exhaustive Events

Two or more events associated to a random experiment are exhaustive if their union is the sample space, i.e. events \(A_1, A_2, \ldots, A_n\) associated to a random experiment with sample space \(S\) are exhaustive if \(A_1 \cup A_2 \cup \ldots \cup A_n=S\).

All elementary events associated to a random experiment form a system of mutually exclusive and exhaustive events.

In a single throw of a die, let us consider the following events:

\(A=\) Getting an even number,

\(B=\) Getting an odd number,

\(C=\) Getting a multiple of 3,

\(D=\) Getting a number greater than 3.

Clearly, \(A\) and \(B\) are exhaustive events as \(A \cup B=S\). Also, \(A\) and \(B\) are mutually exclusive. But, \(A\) and \(C\) are not exhaustive and \(B\) and \(C\) are also not exhaustive. \(A, B, C\) and \(D\) are exhaustive but not mutually exclusive.

For any event \(A\) associated to a random experiment, \(A\) and \(\bar{A}\) form a pair of exhaustive and mutually exclusive events.
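
As a rough check of the single-die example, the events can be written as Python sets and their unions compared with the sample space. This is only a sketch; the helper `exhaustive` is a hypothetical name chosen for illustration.

```python
S = {1, 2, 3, 4, 5, 6}   # sample space for one throw of a die
A = {2, 4, 6}            # even number
B = {1, 3, 5}            # odd number
C = {3, 6}               # multiple of 3
D = {4, 5, 6}            # number greater than 3

def exhaustive(*events):
    """True if the union of the events is the whole sample space S."""
    return set().union(*events) == S

print(exhaustive(A, B))        # True
print(exhaustive(A, C))        # False
print(exhaustive(B, C))        # False
print(exhaustive(A, B, C, D))  # True
print(A & B == set())          # True: A and B have no common outcome
print(sorted(A & D))           # [4, 6]: A and D overlap
```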

Independent Events

Two events \(A\) and \(B\) associated to a random experiment are independent if the probability of occurrence or non occurrence of \(A\) is not affected by the occurrence or non-occurrence of \(B\).

Let there be a bag containing 6 white and 4 red balls. Two balls are drawn one after the other. Consider the following events:

\(A\) = Getting a white ball in first draw.

\(B\) = Getting a red ball in second draw.

If the ball drawn in the first draw is not replaced in the bag, then events \(A\) and \(B\) are not independent events because the probability of occurrence of \(B\) increases or decreases according to whether the first draw results in a white or a red ball. However, \(A\) and \(B\) become independent events if we replace the ball drawn in the first draw.
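
The effect of replacement can be seen by enumerating all equally likely ordered draws from the bag. The sketch below assumes the product rule \(P(A \cap B)=P(A) P(B)\) as the test for independence; the function name `probabilities` is illustrative only.

```python
from fractions import Fraction
from itertools import permutations, product

balls = ["W"] * 6 + ["R"] * 4   # 6 white and 4 red balls

def probabilities(outcomes):
    """Return P(A), P(B), P(A and B) over equally likely ordered draws.
    A = white ball in first draw, B = red ball in second draw."""
    n = len(outcomes)
    p_a = Fraction(sum(1 for f, s in outcomes if f == "W"), n)
    p_b = Fraction(sum(1 for f, s in outcomes if s == "R"), n)
    p_ab = Fraction(sum(1 for f, s in outcomes if f == "W" and s == "R"), n)
    return p_a, p_b, p_ab

without_replacement = list(permutations(balls, 2))   # 10 * 9 ordered draws
with_replacement = list(product(balls, repeat=2))    # 10 * 10 ordered draws

for label, outcomes in [("without", without_replacement),
                        ("with", with_replacement)]:
    p_a, p_b, p_ab = probabilities(outcomes)
    print(label, p_a, p_b, p_ab, p_ab == p_a * p_b)
# without 3/5 2/5 4/15 False
# with 3/5 2/5 6/25 True
```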

Three or more events are independent if the probability of occurrence or non-occurrence of any one of them is not affected by the occurrence or non-occurrence of others.

Note: Events associated to independent random experiments are always independent.

If \(A\) and \(B\) are two mutually exclusive events associated to a random experiment, then the occurrence of any one of these two prevents the occurrence of the other i.e.

If \(A\) occurs, then \(B\) cannot occur, so the probability of \(B\) given \(A\) is 0. If \(B\) occurs, then \(A\) cannot occur, so the probability of \(A\) given \(B\) is 0.

It follows from this that mutually exclusive events with non-zero probabilities are not independent, and independent events with non-zero probabilities are not mutually exclusive.

Theorem 1: (Addition Theorem for two events) If \(A\) and \(B\) are two events associated with a random experiment, then

\(

P(A \cup B)=P(A)+P(B)-P(A \cap B)

\)

Corollary: If \(A\) and \(B\) are mutually exclusive events, then

\(

\begin{array}{cc}

& P(A \cap B)=0 \\

\therefore & P(A \cup B)=P(A)+P(B)

\end{array}

\)

This is the addition theorem for mutually exclusive events.

Theorem 2: (Addition Theorem for three events) If \(A, B, C\) are three events associated with a random experiment, then

\(

\begin{aligned}

P(A \cup B \cup C)=P(A)+P(B)+ & P(C)-P(A \cap B)-P(B \cap C) \\

- & P(A \cap C)+P(A \cap B \cap C)

\end{aligned}

\)

Corollary: If \(A, B, C\) are mutually exclusive events, then

\(

\begin{aligned}

& P(A \cap B)=P(B \cap C)=P(A \cap C)=P(A \cap B \cap C)=0 . \\

\therefore \quad & P(A \cup B \cup C)=P(A)+P(B)+P(C)

\end{aligned}

\)

This is the addition theorem for three mutually exclusive events.

Theorem 3: (Generalized addition theorem) If \(A_1, A_2, \ldots, A_n\) are \(n\) events associated to a random experiment, then

\(

\begin{aligned}

P\left(\bigcup_{i=1}^n A_i\right)= & \sum_{i=1}^n P\left(A_i\right)-\sum_{1 \leq i<j \leq n} P\left(A_i \cap A_j\right)+\sum_{1 \leq i<j<k \leq n} P\left(A_i \cap A_j \cap A_k\right) \\

& -\ldots+(-1)^{n-1} P\left(A_1 \cap A_2 \cap \ldots \cap A_n\right)

\end{aligned}

\)
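
The generalized addition theorem can be sketched as a loop over all groups of \(r\) events, adding their intersection probabilities with alternating sign. The code below is an illustration under the assumption of equally likely elementary events; the function name `union_probability` and the sample events are hypothetical.

```python
from fractions import Fraction
from itertools import combinations

def union_probability(events, sample_space):
    """P(A_1 or ... or A_n) via the generalized addition theorem,
    assuming equally likely elementary events."""
    n = len(sample_space)
    total = Fraction(0)
    for r in range(1, len(events) + 1):
        sign = (-1) ** (r - 1)                    # +, -, +, ...
        for group in combinations(events, r):     # every choice of r events
            total += sign * Fraction(len(set.intersection(*group)), n)
    return total

S = set(range(1, 7))                 # one throw of a die
events = [{2, 4, 6}, {3, 6}, {4, 5, 6}]

by_theorem = union_probability(events, S)
direct = Fraction(len(set().union(*events)), len(S))
print(by_theorem, direct)  # 5/6 5/6
```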

Theorem 4: Let \(A\) and \(B\) be two events associated to a random experiment. Then,

(i) \(P(\bar{A} \cap B)=P(B)-P(A \cap B)\)

(ii) \(P(A \cap \bar{B})=P(A)-P(A \cap B)\)

(iii) \(P((A \cap \bar{B}) \cup(\bar{A} \cap B))=P(A)+P(B)-2 P(A \cap B)\)

\(

\begin{aligned}

& (A \cap B) \cup(\bar{A} \cap B)=B \\

\Rightarrow \quad & P(A \cap B)+P(\bar{A} \cap B)=P(B) \\

\Rightarrow \quad & P(\bar{A} \cap B)=P(B)-P(A \cap B)

\end{aligned}

\)

Remark:

- \(P(\bar{A} \cap B)\) is known as the probability of occurrence of \(B\) only.

- \(P(A \cap \bar{B})\) is known as the probability of occurrence of \(A\) only.

- \(P((A \cap \bar{B}) \cup(\bar{A} \cap B))\) is known as the probability of occurrence of exactly one of two events \(A\) and \(B\).

- If \(A\) and \(B\) are two events associated to a random experiment such that \(A \subset B\), then \(A \cap B=A\) and \(\bar{A} \cap B \neq \phi\), so by Theorem 4 (i) \(P(\bar{A} \cap B)=P(B)-P(A)\).

\(

\therefore \quad P(\bar{A} \cap B) \geq 0 \Rightarrow P(B)-P(A) \geq 0 \Rightarrow P(A) \leq P(B)

\)

Theorem 5: For any two events \(A\) and \(B\), prove that

\(

P(A \cap B) \leq P(A) \leq P(A \cup B) \leq P(A)+P(B)

\)

Theorem 6: For any two events \(A\) and \(B\), prove that the probability that exactly one of \(A, B\) occurs is given by

\(

P(A)+P(B)-2 P(A \cap B)=P(A \cup B)-P(A \cap B) .

\)

Theorem 7: If \(A, B, C\) are three events, then prove that

(i) \(P\) (At least two of \(A, B, C\) occur)

\(

=P(A \cap B)+P(B \cap C)+P(C \cap A)-2 P(A \cap B \cap C)

\)

(ii) \(\quad P\) (Exactly two of \(A, B, C\) occur)

\(

=P(A \cap B)+P(B \cap C)+P(A \cap C)-3 P(A \cap B \cap C)

\)

(iii) \(P\) (Exactly one of \(A, B, C\) occurs)

\(

\begin{aligned}

=P(A)+P(B)+P(C)- & 2 P(A \cap B)-2 P(B \cap C) \\

& -2 P(A \cap C)+3 P(A \cap B \cap C) .

\end{aligned}

\)
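
The three formulas of Theorem 7 can be spot-checked on a small sample space by counting, for each outcome, how many of the events contain it. This is a sketch for one arbitrarily chosen set of events (the integers 1 to 12 with three illustrative events), not a proof.

```python
from fractions import Fraction

S = set(range(1, 13))                 # illustrative sample space
A = {x for x in S if x % 2 == 0}      # multiples of 2
B = {x for x in S if x % 3 == 0}      # multiples of 3
C = {x for x in S if x > 6}           # numbers greater than 6

def P(event):
    """Probability of an event when all outcomes in S are equally likely."""
    return Fraction(len(event), len(S))

def occurring(x):
    """How many of A, B, C contain the outcome x."""
    return (x in A) + (x in B) + (x in C)

at_least_two = P({x for x in S if occurring(x) >= 2})
exactly_two = P({x for x in S if occurring(x) == 2})
exactly_one = P({x for x in S if occurring(x) == 1})

pairs = P(A & B) + P(B & C) + P(A & C)
triple = P(A & B & C)
singles = P(A) + P(B) + P(C)

print(at_least_two == pairs - 2 * triple)              # True
print(exactly_two == pairs - 3 * triple)               # True
print(exactly_one == singles - 2 * pairs + 3 * triple) # True
```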

Example 1: If \(P(A)=1 / 4, P(B)=1 / 2, P(A \cup B)=5 / 8\), then \(P(A \cap B)\) is

(a) \(3 / 8\)

(b) \(1 / 8\)

(c) \(2 / 8\)

(d) \(5 / 8\)

Solution: (b) We have,

\(

\begin{aligned}

& P(A)=\frac{1}{4}, P(B)=\frac{1}{2} \text { and } P(A \cup B)=\frac{5}{8} . \\

\therefore \quad & P(A \cap B)=P(A)+P(B)-P(A \cup B) \\

\Rightarrow \quad & P(A \cap B)=\frac{1}{4}+\frac{1}{2}-\frac{5}{8}=\frac{1}{8}

\end{aligned}

\)

Example 2: A die is thrown. Let \(A\) be the event that the number obtained is greater than 3. Let \(B\) be the event that the number obtained is less than 5. Then, \(P(A \cup B)\) is [AIEEE 2008]

(a) 1

(b) \(2 / 5\)

(c) \(3 / 5\)

(d) 0

Solution: (a) We have,

\(

P(A)=\frac{3}{6}=\frac{1}{2}, P(B)=\frac{4}{6}=\frac{2}{3}

\)

and, \(P(A \cap B)=\) Probability of getting a number greater than 3 and less than 5

\(

\begin{array}{ll}

& =\text { Probability of getting } 4=\frac{1}{6} \\

\therefore \quad & P(A \cup B)=P(A)+P(B)-P(A \cap B)=\frac{1}{2}+\frac{2}{3}-\frac{1}{6}=1

\end{array}

\)

Example 3: For three events \(A, B\) and \(C\), \(P\)(exactly one of the events \(A\) or \(B\) occurs) \(=P\)(exactly one of the events \(B\) or \(C\) occurs) \(=P\)(exactly one of the events \(C\) or \(A\) occurs) \(=p\) and \(P\)(all the three events occur simultaneously) \(=p^2\), where \(0<p<1 / 2\). Then the probability of at least one of the three events \(A, B\) and \(C\) occurring is [IIT 1996]

(a) \(\frac{3 p+2 p^2}{2}\)

(b) \(\frac{p+3 p^2}{2}\)

(c) \(\frac{3 p+p^2}{2}\)

(d) \(\frac{3 p+2 p^2}{4}\)

Solution: (a) Step 1: Translate the Given Conditions into Equations

From Theorem 6, you know the probability of exactly one of two events \(A\) and \(B\) occurring is \(P(A)+P(B)-2 P(A \cap B)\).

According to the problem:

\(P(A)+P(B)-2 P(A \cap B)=p \dots(1)\)

\(P(B)+P(C)-2 P(B \cap C)=p \dots(2)\)

\(P(C)+P(A)-2 P(C \cap A)=p \dots(3)\)

\(P(A \cap B \cap C)=p^2 \dots(4)\)

Step 2: Sum the First Three Equations

Adding equations (1), (2), and (3) together:

\(

(P(A)+P(B)-2 P(A \cap B))+(P(B)+P(C)-2 P(B \cap C))+(P(C)+P(A)-2P(C \cap A))=3 p

\)

Group the terms:

\(

2[P(A)+P(B)+P(C)]-2[P(A \cap B)+P(B \cap C)+P(C \cap A)]=3 p

\)

Divide the entire equation by 2 :

\(

[P(A)+P(B)+P(C)]-[P(A \cap B)+P(B \cap C)+P(C \cap A)]=\frac{3 p}{2}

\)

Step 3: Apply the Addition Theorem for Three Events

We are looking for \(P(A \cup B \cup C)\). According to Theorem 2:

\(

P(A \cup B \cup C)=\underbrace{P(A)+P(B)+P(C)-[P(A \cap B)+P(B \cap C)+P(C \cap A)]}_{\text {This is exactly what we found in Step 2}}+P(A \cap B \cap C)

\)

Substitute the values:

The grouped term \(=\frac{3 p}{2}\)

\(P(A \cap B \cap C)=p^2\)

\(

P(A \cup B \cup C)=\frac{3 p}{2}+p^2

\)

Step 4: Simplify to Match the Options

To get a single fraction, find a common denominator:

\(

P(A \cup B \cup C)=\frac{3 p+2 p^2}{2}

\)

Example 4: Let \(A, B\) and \(C\) be three events such that \(P(A)=0.3, \quad P(B)=0.4, \quad P(C)=0.8, \quad P(A \cap B)=0.08\), \(P(A \cap C)=0.28, P(A \cap B \cap C)=0.09\). If \(P(A \cup B \cup C) \geq 0.75\), then show that \(P(B \cap C)\) satisfies [CEE (Delhi) 2009]

(a) \(P(B \cap C) \leq 0.23\)

(b) \(P(B \cap C) \leq 0.48\)

(c) \(0.23 \leq P(B \cap C) \leq 0.48\)

(d) \(0.23 \leq P(B \cap C) \geq 0.48\)

Solution: (c) We know that the probability of occurrence of an event is always less than or equal to 1 and it is given that \(P(A \cup B \cup C) \geq 0.75\)

\(

\begin{array}{ll}

\therefore & 0.75 \leq P(A \cup B \cup C) \leq 1 \\

\Rightarrow & 0.75 \leq P(A)+P(B)+P(C)-P(A \cap B)-P(B \cap C) \\

& \quad-P(A \cap C)+P(A \cap B \cap C) \leq 1 \\

\Rightarrow & 0.75 \leq 0.3+0.4+0.8-0.08-P(B \cap C)-0.28+0.09 \leq 1 \\

\Rightarrow & 0.75 \leq 1.59-0.36-P(B \cap C) \leq 1 \\

\Rightarrow & 0.75 \leq 1.23-P(B \cap C) \leq 1 \\

\Rightarrow & -0.48 \leq-P(B \cap C) \leq-0.23 \Rightarrow 0.23 \leq P(B \cap C) \leq 0.48

\end{array}

\)

Explanation: Step 1: State the Addition Theorem

Recall the formula for three events:

\(

P(A \cup B \cup C)=P(A)+P(B)+P(C)-[P(A \cap B)+P(B \cap C)+P(C \cap A)]+P(A \cap B \cap C)

\)

Step 2: Substitute the Given Values

Plug in the values provided in the question:

\(

\begin{aligned}

& P(A)=0.3 \\

& P(B)=0.4 \\

& P(C)=0.8 \\

& P(A \cap B)=0.08 \\

& P(A \cap C)=0.28 \\

& P(A \cap B \cap C)=0.09

\end{aligned}

\)

Let \(x=P(B \cap C)\). The equation becomes:

\(

P(A \cup B \cup C)=0.3+0.4+0.8-[0.08+x+0.28]+0.09

\)

Simplify the constant terms:

\(

\begin{gathered}

P(A \cup B \cup C)=1.5-[0.36+x]+0.09 \\

P(A \cup B \cup C)=1.5-0.36-x+0.09=1.23-x

\end{gathered}

\)

Step 3: Apply the Condition \(P(A \cup B \cup C) \geq 0.75\)

The problem states that \(P(A \cup B \cup C) \geq 0.75\).

\(

\begin{gathered}

1.23-x \geq 0.75 \\

-x \geq 0.75-1.23 \\

-x \geq-0.48 \\

x \leq 0.48

\end{gathered}

\)

Step 4: Apply the Condition \(P(A \cup B \cup C) \leq 1\)

We also know that no probability can exceed 1. Thus, \(P(A \cup B \cup C) \leq 1\).

\(

\begin{gathered}

1.23-x \leq 1 \\

-x \leq 1-1.23 \\

-x \leq-0.23 \\

x \geq 0.23

\end{gathered}

\)

Step 5: Combine the Results

Combining the two inequalities:

\(

0.23 \leq P(B \cap C) \leq 0.48

\)
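
The arithmetic in Example 4 can be reproduced exactly with rational numbers. The sketch below takes the values as given in the question and uses illustrative variable names; `x` in the comments stands for \(P(B \cap C)\).

```python
from fractions import Fraction as F

p_a, p_b, p_c = F("0.3"), F("0.4"), F("0.8")
p_ab, p_ac, p_abc = F("0.08"), F("0.28"), F("0.09")

# P(A or B or C) = constant - x, where x = P(B and C)
constant = p_a + p_b + p_c - p_ab - p_ac + p_abc

upper = constant - F("0.75")   # from constant - x >= 0.75
lower = constant - 1           # from constant - x <= 1

print(float(constant), float(lower), float(upper))  # 1.23 0.23 0.48
```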

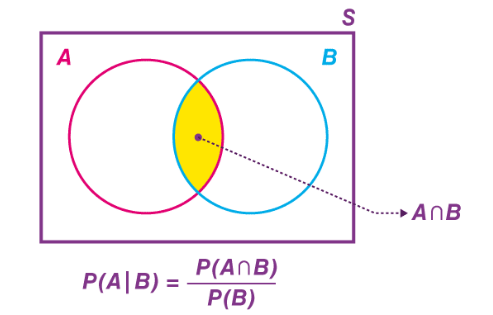

Multiplication Theorems on Probability

Let \(A\) and \(B\) be two events associated with a random experiment. Then, the probability of occurrence of event \(A\) under the condition that \(B\) has already occurred and \(P(B) \neq 0\), is called the conditional probability and it is denoted by \(P(A / B)\). Thus, we have \(P(A / B)=\) Probability of occurrence of \(A\) given that \(B\) has already occurred.

Similarly, \(P(B / A)\) when \(P(A) \neq 0\) is defined as the probability of occurrence of event \(B\) when \(A\) has already occurred.

Let there be a bag containing 5 white and 4 red balls. Two balls are drawn from the bag one after the other without replacement. Consider the following events:

\(

\begin{aligned}

& A=\text { Drawing a white ball in the first draw, } \\

& B=\text { Drawing a red ball in the second draw. }

\end{aligned}

\)

Now,

\(

\begin{aligned}

& P(B / A)= \text { Probability of drawing a red ball in second } \\

& \text { draw given that a white ball has already } \\

& \text { been drawn in the first draw } \\

& \Rightarrow \quad P(B / A)= \text { Probability of drawing a red ball from a bag } \\

& \text { containing } 4 \text { white and } 4 \text { red balls } \\

& \Rightarrow \quad P(B / A)=\frac{4}{8}=\frac{1}{2}

\end{aligned}

\)

For this random experiment \(P(A / B)\) is not meaningful because \(A\) cannot occur after the occurrence of event \(B\).

In the above discussion events \(A\) and \(B\) were subsets of two different sample spaces as they are outcomes of two different trials which are performed one after the other.

Consider the random experiment of throwing a pair of dice and two events associated with it given by

\(

\begin{aligned}

A & =\text { The sum of the numbers on two dice is } 8 \\

& =\{(2,6),(6,2),(3,5),(5,3),(4,4)\} \\

B & =\text { There is an even number on first die. } \\

& =\{(2,1), \ldots,(2,6),(4,1), \ldots,(4,6),(6,1), \ldots,(6,6)\}

\end{aligned}

\)

In this case, events \(A\) and \(B\) are subsets of the same sample space. So, we have the following meanings for \(P(A / B)\) and \(P(B / A)\).

We have,

\(

P(A / B)=\text { Probability of occurrence of } A \text { when } B \text { occurs }

\)

\(

P(A / B)=\frac{\begin{array}{c}

\text { Number of elementary events in } \\

B \text { which are favourable to } A

\end{array}}{\text { Number of elementary events in } B}=\frac{3}{18}=\frac{1}{6}

\)

\(P(B / A)=\) Probability of occurrence of \(B\) when \(A\) occurs.

\(

P(B / A)=\frac{\begin{array}{l}

\text { Number of elementary events in } \\

\text { A which are favourable to } B

\end{array}}{\text { Number of elementary events in } A}=\frac{3}{5}

\)
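
The two conditional probabilities above can be verified by counting within the reduced sample space. This is a minimal sketch; the helper `conditional` is a hypothetical name for the ratio "favourable elementary events in the given event / elementary events in the given event".

```python
from fractions import Fraction
from itertools import product

S = set(product(range(1, 7), repeat=2))   # throw of a pair of dice
A = {s for s in S if sum(s) == 8}         # sum of the numbers is 8
B = {s for s in S if s[0] % 2 == 0}       # even number on first die

def conditional(event, given):
    """P(event / given) by counting inside the reduced sample space."""
    return Fraction(len(event & given), len(given))

print(len(A), len(B), len(A & B))  # 5 18 3
print(conditional(A, B))           # 1/6  (that is, 3/18)
print(conditional(B, A))           # 3/5
```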

Example 5: A die is thrown twice and the sum of the numbers appearing is observed to be 6. The conditional probability that the number 4 has appeared at least once, is

(a) \(\frac{3}{5}\)

(b) \(\frac{2}{5}\)

(c) \(\frac{5}{36}\)

(d) \(\frac{1}{36}\)

Solution: (b) To solve this, we use the formula for conditional probability:

\(

P(A \mid B)=\frac{P(A \cap B)}{P(B)}

\)

Where:

Event \(B\) : The sum of the numbers is 6.

Event \({A}\) : The number 4 appears at least once.

Step 1: Identify the Sample Space for Event \(B\)

First, let’s list all the possible outcomes (pairs) where the sum of the two dice equals 6:

\((1,5)\), \((2,4)\), \((3,3)\), \((4,2)\), \((5,1)\)

There are 5 possible outcomes. So, \(n(B)=5\).

Step 2: Identify the Intersection (\(A \cap B\))

Now, we look within Event \(B\) to see how many of those outcomes include the number 4 at least once:

\((2,4)\), \((4,2)\)

There are 2 outcomes that satisfy both conditions. So, \(n(A \cap B)=2\).

Step 3: Apply the Formula

Since we are looking for the probability of \(A\) given that \(B\) has already occurred, we divide the number of favorable outcomes by the total number of outcomes in the reduced sample space \(B\) :

\(

P(A \mid B)=\frac{n(A \cap B)}{n(B)}=\frac{2}{5}

\)

Example 6: Two integers are selected at random from integers 1 to 11. If the sum is even, the probability that both the numbers are odd, is

(a) \(\frac{3}{5}\)

(b) \(\frac{2}{5}\)

(c) \(\frac{1}{5}\)

(d) \(\frac{4}{5}\)

Solution: (a) To solve this, we again use the concept of conditional probability. We are looking for the probability that both numbers are odd, given that their sum is even.

Step 1: Analyze the Set

The integers from 1 to 11 are: \(\{1,2,3,4,5,6,7,8,9,10,11\}\).

Total numbers: 11

Odd numbers \((O)\) : \(\{1,3,5,7,9,11\}\) – there are 6 odd numbers.

Even numbers \((E):\{2,4,6,8,10\}\) – there are 5 even numbers.

Step 2: Define Event \(B\) (The Sum is Even)

A sum of two integers is even in two cases:

Both numbers are Odd \((O+O=\mathrm{Even})\)

Both numbers are Even (\(E+E=\) Even)

We calculate the number of ways to choose 2 numbers from each group:

Ways to choose 2 Odd numbers: \(\binom{6}{2}=\frac{6 \times 5}{2 \times 1}=15\)

Ways to choose 2 Even numbers: \(\binom{5}{2}=\frac{5 \times 4}{2 \times 1}=10\)

Total favorable outcomes for a sum to be even: \(15+10=25\).

Step 3: Define Event \(A\) (Both Numbers are Odd)

We want the cases where both numbers are odd within the set where the sum is already even.

Number of ways both are odd: 15 (calculated in the previous step).

Step 4: Apply the Conditional Probability Formula

\(

\begin{aligned}

P(\text { Both Odd } \mid \text { Sum is Even }) & =\frac{n(\text { Both Odd } \cap \text { Sum is Even })}{n(\text { Sum is Even })} \\

P & =\frac{15}{25}

\end{aligned}

\)

Reducing the fraction by 5:

\(

P=\frac{3}{5}

\)
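
The counts in Example 6 can be confirmed by listing every pair. The sketch assumes, as the solution does, that two distinct integers are chosen and that order does not matter.

```python
from fractions import Fraction
from itertools import combinations

pairs = list(combinations(range(1, 12), 2))   # all 2-subsets of 1..11
even_sum = [p for p in pairs if sum(p) % 2 == 0]
both_odd = [p for p in even_sum if p[0] % 2 == 1 and p[1] % 2 == 1]

answer = Fraction(len(both_odd), len(even_sum))
print(len(even_sum), len(both_odd), answer)  # 25 15 3/5
```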

Theorem 1:

If \(A\) and \(B\) are two events associated with a random experiment, then

\(

P(A \cap B)=P(A) P(B / A), \text { if } P(A) \neq 0 \dots(i)

\)

\(

P(A \cap B)=P(B) P(A / B), \text { if } P(B) \neq 0 \dots(ii)

\)

Note 1: From (i) and (ii) in the above theorem, we find that

\(

P(B / A)=\frac{P(A \cap B)}{P(A)} \text { and } P(A / B)=\frac{P(A \cap B)}{P(B)}

\)

Note 2: If \(A\) and \(B\) are independent events, then \(P(A / B)=P(A)\) and \(P(B / A)=P(B)\).

\(

\therefore \quad P(A \cap B)=P(A) P(B)

\)

Also,

\(

\begin{aligned}

& P(A \cup B)=P(A)+P(B)-P(A \cap B) \\

\Rightarrow \quad & P(A \cup B)=P(A)+P(B)-P(A) P(B) \\

\Rightarrow \quad & P(A \cup B)=1-[1-P(A)-P(B)+P(A) P(B)] \\

\Rightarrow \quad & P(A \cup B)=1-[(1-P(A))(1-P(B))] \\

\Rightarrow \quad & P(A \cup B)=1-P(\bar{A}) P(\bar{B})

\end{aligned}

\)

Example 7: A bag contains 10 white and 15 black balls. If two balls are drawn in succession without replacement, then the probability that first is white and second is black, is

(a) \(\frac{2}{5}\)

(b) \(\frac{5}{8}\)

(c) \(\frac{1}{4}\)

(d) \(\frac{1}{5}\)

Solution: (c) Since the balls are drawn without replacement, the outcome of the first draw changes the total number of balls available for the second draw. This is a classic application of the Multiplication Theorem for Dependent Events.

Step 1: Probability of the First Event (White Ball)

The bag contains:

White balls \((W)=10\)

Black balls \((B)=15\)

Total balls \(=25\)

The probability that the first ball is white is:

\(

P\left(W_1\right)=\frac{10}{25}=\frac{2}{5}

\)

Step 2: Probability of the Second Event (Black Ball)

Since the first white ball was not replaced, the contents of the bag change:

Remaining white balls \(=9\)

Remaining black balls = 15

New Total balls = 24

The probability that the second ball is black, given the first was white, is:

\(

P\left(B_2 \mid W_1\right)=\frac{15}{24}=\frac{5}{8}

\)

Step 3: Apply the Multiplication Theorem

To find the probability of both events happening in this specific order (\(W\) then \(B\)):

\(

\begin{gathered}

P\left(W_1 \cap B_2\right)=P\left(W_1\right) \cdot P\left(B_2 \mid W_1\right) \\

P=\frac{2}{5} \cdot \frac{5}{8}

\end{gathered}

\)

By canceling out the 5s:

\(

P=\frac{2}{8}=\frac{1}{4}

\)

Theorem 2: (Extension of multiplication theorem)

If \(A_1, A_2, \ldots, A_n\) are \(n\) events associated with a random experiment, then

\(

\begin{aligned}

& P\left(A_1 \cap A_2 \cap A_3 \cap \ldots \cap A_n\right) \\

& =P\left(A_1\right) P\left(A_2 / A_1\right) P\left(A_3 / A_1 \cap A_2\right) \ldots P\left(A_n / A_1 \cap A_2 \cap \ldots \cap A_{n-1}\right),

\end{aligned}

\)

where \(P\left(A_i / A_1 \cap A_2 \ldots \cap A_{i-1}\right)\) represents the conditional probability of the occurrence of event \(A_i\), given that the events \(A_1, A_2, \ldots, A_{i-1}\) have already occurred.

Example 8: The probability of drawing a diamond card in each of the two consecutive draws from a well shuffled pack of cards, if the card drawn is not replaced after the first draw, is

(a) \(\frac{4}{17}\)

(b) \(\frac{13}{17}\)

(c) \(\frac{1}{17}\)

(d) none of these

Solution: (c) This problem is another classic case of dependent events, as the first card is not replaced before the second draw.

Step 1: Probability of the First Diamond

A standard deck has 52 cards, and there are 13 cards in each suit (Diamonds, Hearts, Clubs, Spades).

Total cards = 52

Diamond cards \((D)=13\)

The probability of drawing a diamond on the first draw is:

\(

P\left(D_1\right)=\frac{13}{52}=\frac{1}{4}

\)

Step 2: Probability of the Second Diamond

Since the first diamond was not replaced, the deck composition changes for the second draw:

Remaining Diamonds \(=12\)

Remaining Total cards = 51

The probability of drawing a second diamond, given the first was a diamond, is:

\(

P\left(D_2 \mid D_1\right)=\frac{12}{51}

\)

Step 3: Apply the Multiplication Theorem

To find the probability of both cards being diamonds (\(D_1 \cap D_2\)), we multiply the probabilities:

\(

\begin{gathered}

P\left(D_1 \cap D_2\right)=P\left(D_1\right) \cdot P\left(D_2 \mid D_1\right) \\

P=\frac{1}{4} \cdot \frac{12}{51}=\frac{1}{17}

\end{gathered}

\)
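
The multiplication theorem for draws without replacement can be sketched as a running product of conditional probabilities, updating the counts after each draw. The function name `sequence_probability` and the dictionary keys are illustrative assumptions; the two calls reproduce Example 7 and Example 8.

```python
from fractions import Fraction

def sequence_probability(counts, sequence):
    """Probability of drawing the given sequence of kinds, one by one
    without replacement, as a product of conditional probabilities."""
    counts = dict(counts)            # copy so the caller's dict is untouched
    total = sum(counts.values())
    prob = Fraction(1)
    for kind in sequence:
        prob *= Fraction(counts[kind], total)   # P(A_i / earlier draws)
        counts[kind] -= 1                       # the drawn item is not replaced
        total -= 1
    return prob

# Example 7: 10 white and 15 black balls, first white then black.
print(sequence_probability({"white": 10, "black": 15}, ["white", "black"]))  # 1/4

# Example 8: two diamonds in succession from a pack of 52 cards.
print(sequence_probability({"diamond": 13, "other": 39}, ["diamond", "diamond"]))  # 1/17
```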

Example 9: Two balls are drawn from an urn containing 2 white, 3 red and 4 black balls one by one without replacement. The probability that at least one ball is red, is

(a) \(\frac{7}{12}\)

(b) \(\frac{5}{12}\)

(c) \(\frac{2}{3}\)

(d) \(\frac{5}{8}\)

Solution: (a) When you see the phrase “at least one,” it is almost always easier to calculate the probability of the opposite event (the complement) and subtract it from 1.

The opposite of “at least one ball is red” is “no balls are red.”

Step 1: Analyze

White \((W)=2\)

\(\operatorname{Red}(R)=3\)

Black \((B)=4\)

Total Balls = 9

Non-Red Balls \((W+B)=6\)

Step 2: Calculate \(P\) (No Red Balls)

We are drawing two balls without replacement, so the events are dependent.

Probability first ball is not red:

\(

P\left(\text { Not } R_1\right)=\frac{6}{9}=\frac{2}{3}

\)

Probability second ball is not red (given the first wasn’t):

There are now 5 non-red balls left out of 8 total balls.

\(

P\left(\operatorname{Not} R_2 \mid \operatorname{Not} R_1\right)=\frac{5}{8}

\)

Multiply for the intersection:

\(

P(\text { Zero Red })=\frac{2}{3} \cdot \frac{5}{8}=\frac{10}{24}=\frac{5}{12}

\)

Step 3: Find the Complement

Now, subtract the probability of drawing zero red balls from the total probability (1):

\(

\begin{gathered}

P(\text { At least one Red })=1-P(\text { Zero Red }) \\

P=1-\frac{5}{12} \\

P=\frac{12-5}{12}=\frac{7}{12}

\end{gathered}

\)
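
The complement calculation in Example 9 can be cross-checked by enumerating all ordered draws of two balls. This is only a sketch, treating the nine balls as distinct and each ordered pair as equally likely.

```python
from fractions import Fraction
from itertools import permutations

urn = ["W"] * 2 + ["R"] * 3 + ["B"] * 4   # 2 white, 3 red, 4 black
draws = list(permutations(urn, 2))         # ordered draws without replacement

no_red = sum(1 for d in draws if "R" not in d)
p_at_least_one_red = 1 - Fraction(no_red, len(draws))

print(no_red, len(draws), p_at_least_one_red)  # 30 72 7/12
```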

Example 10: It is given that the events \(A\) and \(B\) are such that \(P(A)=\frac{1}{4}, P(A / B)=\frac{1}{2}\) and \(P(B / A)=\frac{2}{3}\). Then, \(P(B)\) is [AIEEE 2008]

(a) \(\frac{2}{3}\)

(b) \(\frac{1}{2}\)

(c) \(\frac{1}{6}\)

(d) \(\frac{1}{3}\)

Solution: (d) To find \(P(B)\), we need to use the Multiplication Theorem for dependent events. This theorem relates the intersection of two events to their conditional probabilities.

Step 1: Use the Multiplication Theorem for \(P(A \cap B)\)

We know that the probability of both \(A\) and \(B\) occurring can be written in two ways:

\(P(A \cap B)=P(A) \cdot P(B \mid A)\)

\(P(A \cap B)=P(B) \cdot P(A \mid B)\)

Step 2: Calculate the value of \(P(A \cap B)\)

Using the first formula and the values provided in the question \(\left(P(A)=\frac{1}{4}\right.\) and \(P(B \mid A)=\frac{2}{3}\)):

\(

\begin{gathered}

P(A \cap B)=\frac{1}{4} \cdot \frac{2}{3} \\

P(A \cap B)=\frac{2}{12}=\frac{1}{6}

\end{gathered}

\)

Step 3: Solve for \(P(B)\)

Now, we use the second version of the multiplication formula and substitute the value we just found \(\left(P(A \cap B)=\frac{1}{6}\right)\) along with \(P(A \mid B)=\frac{1}{2}\) :

\(

\begin{gathered}

P(A \cap B)=P(B) \cdot P(A \mid B) \\

\frac{1}{6}=P(B) \cdot \frac{1}{2}

\end{gathered}

\)

To isolate \(P(B)\), multiply both sides by 2 :

\(

\begin{aligned}

& P(B)=\frac{1}{6} \cdot 2 \\

& P(B)=\frac{2}{6}=\frac{1}{3}

\end{aligned}

\)

Example 11: One ticket is selected at random from 50 tickets numbered \(00,01,02, \ldots, 49\). Then the probability that the sum of the digits on the selected ticket is 8, given that the product of these digits is zero, equals [AIEEE 2009]

(a) \(\frac{1}{14}\)

(b) \(\frac{1}{7}\)

(c) \(\frac{5}{14}\)

(d) \(\frac{1}{50}\)

Solution: (a) To solve this, we use the definition of conditional probability. We are looking for the probability that the sum of the digits is 8, given that the product of the digits is zero.

The formula is:

\(

P(A \mid B)=\frac{n(A \cap B)}{n(B)}

\)

Step 1: Identify Event \(B\) (Product of digits is zero)

A two-digit number (from 00 to 49) has a product of zero if at least one of its digits is 0. Let’s list these outcomes:

Numbers starting with \(0: 00,01,02,03,04,05,06,07,08,09\) (10 tickets)

Numbers ending with 0 (within the range up to 49): \(10,20,30,40\) (4 tickets)

Note: “00” is counted in the first list. There are no overlaps other than that.

Total number of tickets in Event \(B: n(B)=10+4=14\).

Step 2: Identify Event \(A \cap B\) (Sum is 8 AND Product is 0)

Now, we look through the 14 tickets identified in Step 1 to see which ones have digits that add up to 8:

From the first list (00-09): Only \(08(0+8=8)\).

From the second list (10-40): Only 80 would work, but the tickets only go up to 49. Therefore, there are no tickets in this sub-list where the sum is 8.

Total number of tickets in the intersection: \(n(A \cap B)=1\).

Step 3: Apply the Formula

\(

P(A \mid B)=\frac{1}{14}

\)
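
The ticket count in Example 11 can be confirmed with a short enumeration. The sketch below represents each ticket as a two-character string; the helper `digits` is a hypothetical name.

```python
from fractions import Fraction

tickets = [f"{n:02d}" for n in range(50)]   # "00", "01", ..., "49"

def digits(ticket):
    """Return the two digits of a ticket as integers."""
    return int(ticket[0]), int(ticket[1])

# Event B: product of the digits is zero.
B = [t for t in tickets if digits(t)[0] * digits(t)[1] == 0]
# Event A and B: within B, the digits also add up to 8.
A_and_B = [t for t in B if sum(digits(t)) == 8]

print(len(B), A_and_B, Fraction(len(A_and_B), len(B)))  # 14 ['08'] 1/14
```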

More on Independent Events

We know that two events \(A\) and \(B\) are independent iff \(P(B / A)=P(B)\) and \(P(A / B)=P(A)\).

Also, \(P(A \cap B)=P(A) P(B)\) iff \(A\) and \(B\) are independent events.

For example, Independent Events (Sampling with Replacement)

Suppose you have a bag with 3 Red balls and 7 Blue balls. You draw one ball, note its color, put it back, and draw a second ball.

Event A: First ball is Red \((P(A)=3 / 10)\).

Event B: Second ball is Red \((P(B)=3 / 10)\).

Because you replaced the first ball, the bag hasn’t changed. The probability of the second draw is entirely unaffected by the first.

\(

P(A \cap B)=\frac{3}{10} \times \frac{3}{10}=\frac{9}{100}=0.09

\)

Pairwise Independent Events

Let \(A_1, A_2, \ldots, A_n\) be \(n\) events associated to a random experiment. These events are said to be pairwise independent if

\(

P\left(A_i \cap A_j\right)=P\left(A_i\right) P\left(A_j\right) \text { for } i \neq j ; i, j=1,2, \ldots, n

\)

For Example, Two Coin Tosses

Consider tossing two fair coins.

Sample Space \(S=\{H H, H T, T H, T T\}\)

Event \(A\) : First toss is Head \(\{H H, H T\} \rightarrow P(A)=1 / 2\)

Event \(B\) : Second toss is Head \(\{H H, T H\} \rightarrow P(B)=1 / 2\)

Event \(C\) : Exactly one Head occurs \(\{H T, T H\} \rightarrow P(C)=2 / 4=1 / 2\)

Pairwise Check:

\(P(A \cap B)=P(\{H H\})=1 / 4\) (Equal to \(1 / 2 \cdot 1 / 2\)) – Independent

\(P(B \cap C)=P(\{T H\})=1 / 4\) (Equal to \(1 / 2 \cdot 1 / 2\)) – Independent

\(P(A \cap C)=P(\{H T\})=1 / 4\) (Equal to \(1 / 2 \cdot 1 / 2\)) – Independent

Mutual Check:

Can \(A, B\), and \(C\) all happen?

\(A \cap B\) means \(H H\).

But \(C\) means exactly one head.

It is impossible for both \(H H\) and “exactly one head” to happen.

\(P(A \cap B \cap C)=0\).

Since \(0 \neq(1 / 2 \cdot 1 / 2 \cdot 1 / 2)\), they are not mutually independent. Knowing \(A\) and \(B\) happened tells you for certain that \(C\) did not happen!
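
The pairwise and mutual checks for the two-coin example can be written out directly with sets. This is a minimal sketch with illustrative names.

```python
from fractions import Fraction

S = {"HH", "HT", "TH", "TT"}   # two fair coin tosses
A = {"HH", "HT"}               # first toss is Head
B = {"HH", "TH"}               # second toss is Head
C = {"HT", "TH"}               # exactly one Head

def P(event):
    """Probability of an event when all outcomes in S are equally likely."""
    return Fraction(len(event), len(S))

pairwise = all(P(X & Y) == P(X) * P(Y) for X, Y in [(A, B), (B, C), (A, C)])
mutual = pairwise and P(A & B & C) == P(A) * P(B) * P(C)

print(pairwise, mutual)  # True False
```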

Mutually Independent Events

Let \(A_1, A_2, \ldots, A_n\) be \(n\) events associated to a random experiment. These events are said to be mutually independent if the probability of the simultaneous occurrence of any finite number of them is equal to the product of their separate probabilities i.e.

\(

\begin{gathered}

P\left(A_i \cap A_j\right)=P\left(A_i\right) P\left(A_j\right), \text { for } i \neq j ; i, j=1,2, \ldots, n \\

P\left(A_i \cap A_j \cap A_k\right)=P\left(A_i\right) P\left(A_j\right) P\left(A_k\right), \\

\quad \text { for distinct } i, j, k ; i, j, k=1,2, \ldots, n \\

\vdots \\

P\left(A_1 \cap A_2 \ldots \cap A_n\right)=P\left(A_1\right) P\left(A_2\right) \ldots P\left(A_n\right)

\end{gathered}

\)

For Example, Rolling a Die and Tossing Two Coins

This is a perfect example of mutual independence because the physical mechanisms are entirely separate. Let:

Event \(A\) : Rolling a 6 on a die \((P(A)=1 / 6)\)

Event \(B\) : Tossing a Head on Coin 1 \((P(B)=1 / 2)\)

Event \(C\) : Tossing a Head on Coin 2 \((P(C)=1 / 2)\)

Why they are mutually independent:

Pairwise: \(P(A \cap B)=\frac{1}{12}=P(A) P(B)\). The die doesn’t affect Coin 1.

Triples: \(P(A \cap B \cap C)=\frac{1}{24}\). This is equal to \(\frac{1}{6} \times \frac{1}{2} \times \frac{1}{2}\).

Remark

If \(A_1, A_2, \ldots, A_n\) are \(n\) events, then the total number of conditions for their pairwise independence is \({ }^n C_2\), whereas for their mutual independence there must be \({ }^n C_2+{ }^n C_3+\ldots+{ }^n C_n=2^n-n-1\) conditions.

For Example, Three Events (\(n=3\))

For events \(A, B\), and \(C\), the number of conditions required are:

Pairwise Independence: You only check pairs.

\(\binom{3}{2}=3\) conditions: \(P(A \cap B), P(B \cap C)\), and \(P(A \cap C)\).

Mutual Independence: You check pairs AND the triple.

\(\binom{3}{2}+\binom{3}{3}=3+1=4\) conditions.

Formula check: \(2^3-3-1=8-4=4\).
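
The count of conditions can be checked for several values of \(n\) with `math.comb`. The function names below are illustrative only.

```python
from math import comb

def pairwise_conditions(n):
    """Number of conditions for pairwise independence of n events."""
    return comb(n, 2)

def mutual_conditions(n):
    """Number of conditions for mutual independence: one per subset
    of size 2, 3, ..., n."""
    return sum(comb(n, r) for r in range(2, n + 1))

for n in range(2, 7):
    print(n, pairwise_conditions(n), mutual_conditions(n), 2**n - n - 1)
# 2 1 1 1
# 3 3 4 4
# 4 6 11 11
# 5 10 26 26
# 6 15 57 57
```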

Theorem

If \(A\) and \(B\) are independent events associated with a random experiment, then prove that

(i) \(\bar{A}\) and \({B}\) are independent events

(ii) \({A}\) and \(\bar{B}\) are independent events

(iii) \(\bar{A}\) and \(\bar{B}\) are also independent events.

Solution: If two events \(A\) and \(B\) have no “influence” over one another (independence), it stands to reason that the occurrence or non-occurrence of one shouldn’t affect the other.

In probability, two events are independent if and only if:

\(

P(A \cap B)=P(A) \cdot P(B)

\)

(i) Prove \(\bar{A}\) and \(B\) are independent

To prove this, we need to show that \(P(\bar{A} \cap B)=P(\bar{A}) \cdot P(B)\).

Observe the Relationship: The event \(B\) can be split into two mutually exclusive parts: the part that overlaps with \(A\) and the part that doesn’t.

\(

B=(A \cap B) \cup(\bar{A} \cap B)

\)

Apply Probability: Since these are disjoint, we can write:

\(

P(B)=P(A \cap B)+P(\bar{A} \cap B)

\)

Substitute Independence: Since \(A\) and \(B\) are independent, \(P(A \cap B)=P(A) P(B)\) :

\(

P(B)=P(A) P(B)+P(\bar{A} \cap B)

\)

Isolate \(P(\bar{A} \cap B)\) :

\(

\begin{gathered}

P(\bar{A} \cap B)=P(B)-P(A) P(B) \\

P(\bar{A} \cap B)=P(B)(1-P(A))

\end{gathered}

\)

Conclusion: Since \(1-P(A)=P(\bar{A})\) :

\(

P(\bar{A} \cap B)=P(B) \cdot P(\bar{A})

\)

(ii) Prove \(A\) and \(\bar{B}\) are independent

This follows the exact same logic as above, just swapping the roles of \(A\) and \(B\).

\(P(A)=P(A \cap B)+P(A \cap \bar{B})\)

\(P(A)=P(A) P(B)+P(A \cap \bar{B})\)

\(P(A \cap \bar{B})=P(A)-P(A) P(B)\)

\(P(A \cap \bar{B})=P(A)(1-P(B))\)

Conclusion: \(P(A \cap \bar{B})=P(A) \cdot P(\bar{B})\)

(iii) Prove \(\bar{A}\) and \(\bar{B}\) are independent

This one is often the most “satisfying” to prove because it uses De Morgan’s Laws. We want to show \(P(\bar{A} \cap \bar{B})=P(\bar{A}) \cdot P(\bar{B})\).

De Morgan’s Law: Recall that \(\bar{A} \cap \bar{B}\) is the same as \(\overline{A \cup B}\) (the complement of the union).

Complement Rule:

\(

P(\bar{A} \cap \bar{B})=1-P(A \cup B)

\)

Addition Rule:

\(

P(\bar{A} \cap \bar{B})=1-[P(A)+P(B)-P(A \cap B)]

\)

Substitute Independence:

\(

P(\bar{A} \cap \bar{B})=1-P(A)-P(B)+P(A) P(B)

\)

Factor by Grouping:

\(

\begin{gathered}

P(\bar{A} \cap \bar{B})=(1-P(A))-P(B)(1-P(A)) \\

P(\bar{A} \cap \bar{B})=(1-P(A))(1-P(B))

\end{gathered}

\)

Conclusion:

\(

P(\bar{A} \cap \bar{B})=P(\bar{A}) \cdot P(\bar{B})

\)
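A concrete check of all three parts may help. The sketch below assumes one throw of a fair die with \(A\) = "even number" and \(B\) = "number at most 2"; these two events are chosen here only for illustration and are not taken from the text.

```python
from fractions import Fraction

# One throw of a fair die (assumed equally likely faces).
S = set(range(1, 7))
A = {2, 4, 6}   # even number (illustrative choice)
B = {1, 2}      # number at most 2 (illustrative choice)

def P(event):
    """Classical probability: favourable outcomes over total outcomes."""
    return Fraction(len(event), len(S))

def independent(X, Y):
    """True when P(X and Y) equals P(X) * P(Y)."""
    return P(X & Y) == P(X) * P(Y)

A_bar, B_bar = S - A, S - B

print(independent(A, B))          # True: the hypothesis of the theorem
print(independent(A_bar, B))      # True: part (i)
print(independent(A, B_bar))      # True: part (ii)
print(independent(A_bar, B_bar))  # True: part (iii)
```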

Remarks

- In what follows the term independent events will mean mutually independent events.

- If \(A\) and \(B\) are independent events associated to a random experiment, then

Probability of occurrence of at least one event

\(

\begin{aligned}

& =P(A \cup B) \\

& =P(A)+P(B)-P(A \cap B) \\

& =P(A)+P(B)-P(A) P(B) \\

& =1-[1-P(A)-P(B)+P(A) P(B)] \\

& =1-(1-P(A))(1-P(B))=1-P(\bar{A}) P(\bar{B})

\end{aligned}

\)

- If \(A_1, A_2, \ldots, A_n\) are independent events associated with a random experiment, then

Probability of occurrence of at least one

\(

\begin{aligned}

& =P\left(A_1 \cup A_2 \cup \ldots \cup A_n\right) \\

& =1-P\left(\overline{A_1 \cup A_2 \cup \ldots \cup A_n}\right) \\

& =1-P\left(\bar{A}_1 \cap \bar{A}_2 \cap \ldots \cap \bar{A}_n\right)=1-P\left(\bar{A}_1\right) P\left(\bar{A}_2\right) \ldots P\left(\bar{A}_n\right)

\end{aligned}

\)
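The "at least one" formula for independent events translates directly into code. The following is a small sketch; `at_least_one` is a hypothetical helper, and the probabilities in the usage lines are illustrative values only.

```python
from fractions import Fraction
from math import prod

def at_least_one(probabilities):
    """P(A_1 or ... or A_n) for independent events: 1 - product of P(not A_i)."""
    return 1 - prod(1 - p for p in probabilities)

# Two independent events with illustrative probabilities 1/2 and 1/3.
pa, pb = Fraction(1, 2), Fraction(1, 3)
print(at_least_one([pa, pb]))  # 2/3
print(pa + pb - pa * pb)       # 2/3, the addition-rule form gives the same value
```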

Example 12: Let \(A\) and \(B\) be two events such that \(P(\overline{A \cup B})=\frac{1}{6}, P(A \cap B)=\frac{1}{4}\) and \(P(\bar{A})=\frac{1}{4}\), where \(\bar{A}\) stands for complement of event \(A\). Then events \(A\) and \(B\) are [AIEEE 2005]

(a) mutually exclusive and independent

(b) independent but not equally likely

(c) equally likely but not independent

(d) equally likely and mutually exclusive

Solution: (b) To determine the relationship between events \(A\) and \(B\), we need to find their individual probabilities and check the conditions for independence and mutual exclusivity.

Step 1: Find \(P(A)\) and \(P(B)\)

We are given the probability of the complement of \(A\):

\(

P(\bar{A})=\frac{1}{4} \Longrightarrow P(A)=1-\frac{1}{4}=\frac{3}{4}

\)

Next, we use the given \(P(\overline{A \cup B})\) to find the union:

\(

P(\overline{A \cup B})=\frac{1}{6} \Longrightarrow P(A \cup B)=1-\frac{1}{6}=\frac{5}{6}

\)

Now, we use the Addition Theorem to find \(P(B)\) :

\(

P(A \cup B)=P(A)+P(B)-P(A \cap B)

\)

\(

\begin{gathered}

\frac{5}{6}=\frac{3}{4}+P(B)-\frac{1}{4} \\

\frac{5}{6}=\frac{2}{4}+P(B) \\

\frac{5}{6}=\frac{1}{2}+P(B) \\

P(B)=\frac{5}{6}-\frac{3}{6}=\frac{2}{6}=\frac{1}{3}

\end{gathered}

\)

Step 2: Check for “Equally Likely”

\(P(A)=\frac{3}{4}\)

\(P(B)=\frac{1}{3}\)

Since \(P(A) \neq P(B)\), the events are not equally likely.

Step 3: Check for “Independent”

Events are independent if \(P(A \cap B)=P(A) \cdot P(B)\).

Given \(P(A \cap B)=\frac{1}{4}\)

Product \(P(A) \cdot P(B)=\frac{3}{4} \cdot \frac{1}{3}=\frac{1}{4}\)

Since \(\frac{1}{4}=\frac{1}{4}\), the events are independent.

Step 4: Check for “Mutually Exclusive”

Events are mutually exclusive if \(P(A \cap B)=0\).

Since \(P(A \cap B)=\frac{1}{4} \neq 0\), they are not mutually exclusive.

The events are independent but have different probabilities (not equally likely).
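The four steps can be replayed with exact fractions. This is a sketch using only the data given in the question; the variable names are illustrative.

```python
from fractions import Fraction as F

# Data given in Example 12.
p_not_union = F(1, 6)   # P(complement of (A or B))
p_both = F(1, 4)        # P(A and B)
p_not_a = F(1, 4)       # P(complement of A)

p_a = 1 - p_not_a               # complement rule
p_union = 1 - p_not_union       # complement rule
p_b = p_union - p_a + p_both    # addition theorem solved for P(B)

equally_likely = p_a == p_b
independent = p_both == p_a * p_b
mutually_exclusive = p_both == 0

print(p_a, p_b)                                         # 3/4 1/3
print(equally_likely, independent, mutually_exclusive)  # False True False
```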

Example 13: Two aeroplanes I and II bomb a target in succession. The probabilities of I and II scoring a hit correctly are 0.3 and 0.2 respectively. The second plane will bomb only if the first misses the target. The probability that the target is hit by second plane is [AIEEE 2007]

(a) 0.2

(b) 0.7

(c) 0.06

(d) 0.14

Solution: (d) This problem relies on the Multiplication Theorem, applied to the sequence of events described by the “only if” condition.

Step 1: Define the Events

Let:

\(H_1\) : Plane I hits the target. \(P\left(H_1\right)=0.3\)

\(M_1\) : Plane I misses the target. \(P\left(M_1\right)=1-0.3=0.7\)

\(H_2\) : Plane II hits the target. \(P\left(H_2\right)=0.2\)

Step 2: Analyze the Condition

The problem states that the second plane will bomb only if the first misses. This means we are looking for the probability of a specific sequence: (First Misses) AND (Second Hits).

This is represented as:

\(

P\left(M_1 \cap H_2\right)

\)

Step 3: Apply the Multiplication Theorem

Since the planes’ scoring abilities are independent, the probability of the second plane hitting after the first misses is:

\(

P\left(M_1 \cap H_2\right)=P\left(M_1\right) \times P\left(H_2\right)

\)

\(

\begin{aligned}

&P=0.7 \times 0.2\\

&P=0.14

\end{aligned}

\)

The probability that the target is hit by the second plane is \(0.14\).

The Law of Total Probability

Theorem (Law of total Probability): Let \(S\) be the sample space and let \(E_1, E_2, \ldots, E_n\) be \(n\) mutually exclusive and exhaustive events associated with a random experiment. If \(A\) is any event which occurs with \(E_1\) or \(E_2\) or … or \(E_n\), then

\(

\begin{aligned}

P(A)=P\left(E_1\right) P\left(A / E_1\right)+P\left(E_2\right) P\left(A / E_2\right)+\ldots+P\left(E_n\right) P\left(A / E_n\right)

\end{aligned}

\)

or, \(\quad P(A)=\sum_{r=1}^n P\left(E_r\right) P\left(A / E_r\right)\)

For example, Three Bags (the classic urn problem, where an urn is simply a container)

Suppose you have three bags with the following contents:

Bag 1 \(\left(E_1\right)\): 2 white and 3 red balls.

Bag 2 \(\left(E_2\right)\): 4 white and 1 red ball.

Bag 3 \(\left(E_3\right)\): 3 white and 4 red balls.

You pick a bag at random and then draw one ball. What is the probability that the ball is White (A)?

Probabilities of picking a bag:

Since there are 3 bags, \(P\left(E_1\right)=P\left(E_2\right)=P\left(E_3\right)=1 / 3\).

Conditional probabilities of drawing a white ball from each bag:

\(P\left(A \mid E_1\right)=2 / 5\)

\(P\left(A \mid E_2\right)=4 / 5\)

\(P\left(A \mid E_3\right)=3 / 7\)

Apply the Law of Total Probability:

\(

\begin{gathered}

P(A)=P\left(E_1\right) P\left(A \mid E_1\right)+P\left(E_2\right) P\left(A \mid E_2\right)+P\left(E_3\right) P\left(A \mid E_3\right) \\

P(A)=\left(\frac{1}{3} \cdot \frac{2}{5}\right)+\left(\frac{1}{3} \cdot \frac{4}{5}\right)+\left(\frac{1}{3} \cdot \frac{3}{7}\right)

\end{gathered}

\)

\(

P(A)=\frac{2}{15}+\frac{4}{15}+\frac{1}{7}=\frac{6}{15}+\frac{1}{7}=\frac{2}{5}+\frac{1}{7}=\frac{14+5}{35}=\frac{19}{35}

\)
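The same computation can be written as a weighted sum. The sketch below assumes the bags are chosen with equal probability, as stated above; `total_probability` is a hypothetical helper name.

```python
from fractions import Fraction

def total_probability(priors, likelihoods):
    """Return the sum of P(E_r) * P(A | E_r) over a partition E_1, ..., E_n."""
    if sum(priors) != 1:
        raise ValueError("priors of an exhaustive, mutually exclusive partition must sum to 1")
    return sum(p * l for p, l in zip(priors, likelihoods))

priors = [Fraction(1, 3)] * 3                                   # P(E_1), P(E_2), P(E_3)
white_given_bag = [Fraction(2, 5), Fraction(4, 5), Fraction(3, 7)]  # P(A | E_r)

print(total_probability(priors, white_given_bag))  # 19/35
```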

Example 14: If from each of the three boxes containing 3 white and 1 black, 2 white and 2 black, 1 white and 3 black balls, one ball is drawn at random, then the probability that 2 white and 1 black ball will be drawn is [IIT 1998]

(a) \(\frac{13}{32}\)

(b) \(\frac{1}{4}\)

(c) \(\frac{1}{32}\)

(d) \(\frac{3}{16}\)

Solution: (a) Step 1: List the probabilities for each box

Let \(W_i\) be the event of drawing a white ball from box \(i\), and \(B_i\) be the event of drawing a black ball from box \(i\).

Box 1: \((3 \mathrm{~W}, 1 \mathrm{~B}) \rightarrow P\left(W_1\right)=3 / 4, \quad P\left(B_1\right)=1 / 4\)

Box 2: \((2 \mathrm{~W}, 2 \mathrm{~B}) \rightarrow P\left(W_2\right)=2 / 4=1 / 2, \quad P\left(B_2\right)=2 / 4=1 / 2\)

Box 3: \((1 \mathrm{~W}, 3 \mathrm{~B}) \rightarrow P\left(W_3\right)=1 / 4, \quad P\left(B_3\right)=3 / 4\)

Step 2: Identify the favorable cases

To get exactly 2 White and 1 Black ball, there are three mutually exclusive scenarios:

Case I: White from Box 1, White from Box 2, Black from Box 3 \(\left(W_1, W_2, B_3\right)\)

Case II: White from Box 1, Black from Box 2, White from Box 3 \(\left(W_1, B_2, W_3\right)\)

Case III: Black from Box 1, White from Box 2, White from Box 3 \(\left(B_1, W_2, W_3\right)\)

Step 3: Calculate the probability for each case

Since the draws are independent, we multiply the probabilities within each case:

Case I: \(P\left(W_1\right) \cdot P\left(W_2\right) \cdot P\left(B_3\right)=\frac{3}{4} \cdot \frac{2}{4} \cdot \frac{3}{4}=\frac{18}{64}\)

Case II: \(P\left(W_1\right) \cdot P\left(B_2\right) \cdot P\left(W_3\right)=\frac{3}{4} \cdot \frac{2}{4} \cdot \frac{1}{4}=\frac{6}{64}\)

Case III: \(P\left(B_1\right) \cdot P\left(W_2\right) \cdot P\left(W_3\right)=\frac{1}{4} \cdot \frac{2}{4} \cdot \frac{1}{4}=\frac{2}{64}\)

Step 4: Add the probabilities

The total probability is the sum of these three disjoint cases:

\(

P(\text { Total })=\frac{18}{64}+\frac{6}{64}+\frac{2}{64}=\frac{26}{64}

\)

Now, simplify the fraction:

\(

P=\frac{13}{32}

\)
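The case analysis can be automated by looping over every white/black pattern across the boxes. This is a minimal sketch, assuming the draws from different boxes are independent; `prob_exact_whites` is an illustrative helper name.

```python
from fractions import Fraction
from itertools import product

# P(white) for Box 1, Box 2, Box 3, taken from the problem statement.
p_white = [Fraction(3, 4), Fraction(1, 2), Fraction(1, 4)]

def prob_exact_whites(p_white, k):
    """Probability of exactly k white balls when one ball is drawn from each box."""
    total = Fraction(0)
    for colours in product([True, False], repeat=len(p_white)):  # True = white
        if sum(colours) != k:
            continue
        term = Fraction(1)
        for is_white, p in zip(colours, p_white):
            term *= p if is_white else 1 - p
        total += term
    return total

print(prob_exact_whites(p_white, 2))  # 13/32
```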

Example 15: A pack of cards consists of 15 cards numbered 1 to 15. Three cards are drawn at random with replacement. Then, the probability of getting two odd and one even numbered card is

(a) \(\frac{348}{1125}\)

(b) \(\frac{398}{1125}\)

(c) \(\frac{448}{1125}\)

(d) \(\frac{498}{1125}\)

Solution: (c) To solve this problem, we need to analyze the set of cards and use the Multiplication Theorem for Independent Events, as the cards are drawn with replacement.

Step 1: Analyze the Numbers

In a pack of 15 cards numbered 1 to 15:

Odd numbers \((O)\): \(\{1,3,5,7,9,11,13,15\}\) – Total \(=8\) cards.

Even numbers \((E)\): \(\{2,4,6,8,10,12,14\}\) – Total \(=7\) cards.

Since the draws are with replacement, the probability for each draw remains constant:

\(P(O)=\frac{8}{15}\)

\(P(E)=\frac{7}{15}\)

Step 2: Identify the Possible Combinations

We are drawing three cards and want exactly two odd and one even card.

The even card could be drawn in any of the three positions:

Case 1: \((O, O, E) \rightarrow \frac{8}{15} \times \frac{8}{15} \times \frac{7}{15}\)

Case 2: \((O, E, O) \rightarrow \frac{8}{15} \times \frac{7}{15} \times \frac{8}{15}\)

Case 3: \((E, O, O) \rightarrow \frac{7}{15} \times \frac{8}{15} \times \frac{8}{15}\)

Step 3: Calculate the Total Probability

Since these cases are mutually exclusive, we add them together. Alternatively, we can use the Binomial formula concept: \(\binom{n}{r} \cdot p^r \cdot q^{n-r}\).

\(

\begin{gathered}

P(2 \text { Odd, } 1 \text { Even })=3 \times\left(\frac{8}{15} \times \frac{8}{15} \times \frac{7}{15}\right) \\

P=3 \times \frac{8 \times 8 \times 7}{15 \times 15 \times 15} \\

P=3 \times \frac{448}{3375}

\end{gathered}

\)

Now, simplify by dividing the numerator and the denominator by 3 :

\(3375 \div 3=1125\)

\(

P=\frac{448}{1125}

\)
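Because the draws are with replacement, all \(15^3=3375\) ordered triples are equally likely, so the answer can also be confirmed by direct counting. The following is a brute-force sketch; the variable names are illustrative.

```python
from fractions import Fraction
from itertools import product

cards = range(1, 16)                       # cards numbered 1 to 15
draws = list(product(cards, repeat=3))     # ordered triples, drawn with replacement

# A draw is favourable when exactly two of the three numbers are odd.
favourable = sum(1 for d in draws if sum(c % 2 for c in d) == 2)

print(favourable, len(draws))              # 1344 3375
print(Fraction(favourable, len(draws)))    # 448/1125
```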

Bayes’ Rule

Theorem (Bayes’ Theorem): Let \(S\) be the sample space and let \(E_1, E_2, \ldots, E_n\) be \(n\) mutually exclusive and exhaustive events associated with a random experiment. If \(A\) is any event which occurs with \(E_1\) or \(E_2\) or \(\ldots\) or \(E_n\), then

\(

P\left(E_i / A\right)=\frac{P\left(E_i\right) P\left(A / E_i\right)}{\sum_{r=1}^n P\left(E_r\right) P\left(A / E_r\right)}, \quad i=1,2, \ldots, n

\)

\(

P\left(E_i \mid A\right)=\frac{\text { Probability of choosing path } E_i \text { and getting } A}{\text { Total probability of getting } A \text { through all possible paths }}

\)

For example, The Three Urns (Reversed)

Suppose we have the same urns from our previous “Total Probability” discussion:

Urn 1 (\(E_1\)): 2 White, 3 Red balls.

Urn 2 (\(E_2\)): 4 White, 1 Red ball.

Urn 3 (\(E_3\)): 3 White, 4 Red balls.

The Scenario: A ball is drawn at random and it is found to be White ( \(A\) ). What is the probability it came from Urn 2?

Individual Probabilities:

\(P\left(E_1\right)=P\left(E_2\right)=P\left(E_3\right)=1 / 3\)

\(P\left(A \mid E_1\right)=2 / 5\)

\(P\left(A \mid E_2\right)=4 / 5\)

\(P\left(A \mid E_3\right)=3 / 7\)

Total Probability of White \(P(A)\) :

(We calculated this earlier): \(\sum P\left(E_r\right) P\left(A \mid E_r\right)=19 / 35\)

Apply Bayes’ Rule for Urn 2:

\(

\begin{gathered}

P\left(E_2 \mid A\right)=\frac{P\left(E_2\right) P\left(A \mid E_2\right)}{P(A)}=\frac{\frac{1}{3} \cdot \frac{4}{5}}{\frac{19}{35}} \\

P\left(E_2 \mid A\right)=\frac{4 / 15}{19 / 35}=\frac{4}{15} \cdot \frac{35}{19}=\frac{4 \cdot 7}{3 \cdot 19}=\frac{28}{57}

\end{gathered}

\)
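Bayes' rule gives the posterior probability of every urn at once: divide each product \(P(E_r) P(A \mid E_r)\) by their sum. The sketch below uses the values from this example; `posteriors` is a hypothetical helper name.

```python
from fractions import Fraction

def posteriors(priors, likelihoods):
    """Return [P(E_r | A)] for each hypothesis E_r using Bayes' rule."""
    joint = [p * l for p, l in zip(priors, likelihoods)]  # P(E_r) * P(A | E_r)
    total = sum(joint)                                    # P(A), by total probability
    return [j / total for j in joint]

priors = [Fraction(1, 3)] * 3
white_given_urn = [Fraction(2, 5), Fraction(4, 5), Fraction(3, 7)]

post = posteriors(priors, white_given_urn)
print(post[1])    # 28/57, the posterior probability of Urn 2
print(sum(post))  # 1, posteriors over a partition always sum to 1
```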

Example 16: The chances of defective screws in three boxes \(A, B, C\) are \(\frac{1}{5}, \frac{1}{6}, \frac{1}{7}\) respectively. A box is selected at random and a screw drawn from it at random is found to be defective. Then the probability that it came from box \(A\) is

(a) \(16 / 29\)

(b) \(1 / 15\)

(c) \(27 / 59\)

(d) \(42 / 107\)

Solution: (d) Let \(E_1, E_2\) and \(E_3\) denote the events of selecting box \(A, B, C\) respectively and \(A\) be the event that a screw selected at random is defective. Then,

\(

\begin{aligned}

P\left(E_1\right) & =P\left(E_2\right)=P\left(E_3\right)=1 / 3 \\

P\left(A / E_1\right) & =\frac{1}{5}, P\left(A / E_2\right)=\frac{1}{6}, P\left(A / E_3\right)=\frac{1}{7}

\end{aligned}

\)

By Bayes’ rule, the required probability \(=P\left(E_1 / A\right)\)

\(

\begin{aligned}

& =\frac{P\left(E_1\right) P\left(A / E_1\right)}{P\left(E_1\right) P\left(A / E_1\right)+P\left(E_2\right) P\left(A / E_2\right)+P\left(E_3\right) P\left(A / E_3\right)} \\

& =\frac{\frac{1}{3} \times \frac{1}{5}}{\frac{1}{3} \times \frac{1}{5}+\frac{1}{3} \times \frac{1}{6}+\frac{1}{3} \times \frac{1}{7}}=\frac{42}{107}

\end{aligned}

\)

Example 17: In an entrance examination there are multiple choice questions. There are four possible answers to each question of which one is correct. The probability that a student knows the answer to a question is \(90 \%\). If he gets the correct answer to the question, then the probability that he was guessing is

(a) \(\frac{1}{9}\)

(b) \(\frac{36}{37}\)

(c) \(\frac{1}{37}\)

(d) \(\frac{37}{40}\)

Solution: (c) This is a classic entrance exam problem that tests your ability to distinguish between prior probability and posterior probability using Bayes’ Theorem.

Step 1: Define the Events

\(E_1\) : The student knows the answer.

\(E_2\) : The student guesses the answer.

\(A\): The student gets the correct answer.

From the problem, we know:

\(P\left(E_1\right)=90 \%=0.9\)

\(P\left(E_2\right)=1-0.9=0.1\) (Since he either knows it or he doesn’t)

Step 2: Define Conditional Probabilities

\(P\left(A \mid E_1\right)\) : Probability of being correct given he knows the answer. This is 1 (if you know it, you get it right).

\(P\left(A \mid E_2\right)\) : Probability of being correct given he is guessing. Since there are 4 choices and only 1 is correct, this is \(1 / 4\) (or 0.25 ).

Step 3: Apply Bayes’ Theorem

We want to find \(P\left(E_2 \mid A\right)\), the probability that he was guessing given that he got the answer correct:

\(

P\left(E_2 \mid A\right)=\frac{P\left(E_2\right) P\left(A \mid E_2\right)}{P\left(E_1\right) P\left(A \mid E_1\right)+P\left(E_2\right) P\left(A \mid E_2\right)}

\)

Substitute the values:

\(

P\left(E_2 \mid A\right)=\frac{0.1 \times \frac{1}{4}}{(0.9 \times 1)+\left(0.1 \times \frac{1}{4}\right)}

\)

Multiply the numerator and denominator by 4 to clear the fraction:

\(

\begin{aligned}

& P\left(E_2 \mid A\right)=\frac{0.1}{3.6+0.1} \\

& P\left(E_2 \mid A\right)=\frac{0.1}{3.7}=\frac{1}{37}

\end{aligned}

\)

Example 18: A man is known to speak the truth 3 out of 4 times. He throws a die and reports that it is a six. The probability that it is actually a six is

(a) \(3 / 8\)

(b) \(1 / 5\)

(c) \(3 / 4\)

(d) none of these

Solution: (a) Step 1: Define the Events

\(E_1\) : The die actually shows a six.

\(P\left(E_1\right)=1 / 6\)

\(E_2\) : The die shows not a six (\(1,2,3,4\), or 5).

\(P\left(E_2\right)=5 / 6\)

\(A\): The man reports that it is a six.

Step 2: Define Conditional Probabilities

\(P\left(A \mid E_1\right)\) : The probability he reports a six when it is a six. This means he is telling the truth.

\(P\left(A \mid E_1\right)=3 / 4\)

\(P\left(A \mid E_2\right)\) : The probability he reports a six when it is not a six. This means he is lying.

\(P\left(A \mid E_2\right)=1-3 / 4=1 / 4\)

Step 3: Apply Bayes’ Theorem

We want to find \(P\left(E_1 \mid A\right)\), the probability that it is actually a six given that he reported a six:

\(

P\left(E_1 \mid A\right)=\frac{P\left(E_1\right) P\left(A \mid E_1\right)}{P\left(E_1\right) P\left(A \mid E_1\right)+P\left(E_2\right) P\left(A \mid E_2\right)}

\)

Substitute the values:

\(

P\left(E_1 \mid A\right)=\frac{\frac{1}{6} \times \frac{3}{4}}{\left(\frac{1}{6} \times \frac{3}{4}\right)+\left(\frac{5}{6} \times \frac{1}{4}\right)}

\)

Simplify the numerator and denominator:

\(

\begin{gathered}

P\left(E_1 \mid A\right)=\frac{\frac{3}{24}}{\frac{3}{24}+\frac{5}{24}} \\

P\left(E_1 \mid A\right)=\frac{3 / 24}{8 / 24} \\

P\left(E_1 \mid A\right)=\frac{3}{8}

\end{gathered}

\)

The probability that the die actually showed a six is \(3 / 8\).
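The same answer can be reached by counting equally likely cases. The sketch below models "truth 3 out of 4 times" as four equally likely tokens, and it makes explicit an assumption that the solution uses implicitly: whenever the man lies about a non-six, his report is "six". The names here are illustrative.

```python
from fractions import Fraction
from itertools import product

faces = range(1, 7)
tokens = ["truth", "truth", "truth", "lie"]  # he tells the truth 3 times out of 4

# 6 faces * 4 tokens = 24 equally likely (face, token) cases.
cases = list(product(faces, tokens))

def reports_six(face, token):
    """He says 'six' when a six is reported truthfully, or (by assumption) when he
    lies about a non-six; a lie about an actual six is some other number."""
    return (face == 6) == (token == "truth")

reported = [(f, t) for f, t in cases if reports_six(f, t)]
actual_six = [(f, t) for f, t in reported if f == 6]

print(len(actual_six), len(reported))               # 3 8
print(Fraction(len(actual_six), len(reported)))     # 3/8
```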

Binomial Trials and Binomial Distribution

Consider a random experiment whose outcomes can be classified as success or failure, i.e. each trial results in one of only two outcomes, \(E_1\) (success) or \(E_2\) (failure). Further assume that the experiment can be repeated several times, that the probabilities of success and failure in any trial are \(p\) and \(q\) (with \(p+q=1\)) and do not vary from trial to trial, and finally that different trials are independent. Such an experiment is called a binomial experiment and its trials are said to be binomial trials. For instance, when a fair coin is tossed several times, each outcome is either a success (say, occurrence of a head) or a failure (say, occurrence of a tail).

A probability distribution representing binomial trials is said to be a binomial distribution. Let us consider a binomial experiment which has been repeated \(n\) times. Let the probabilities of success and failure in any trial be \(p\) and \(q\), respectively. The number of ways of choosing which \(r\) of the \(n\) trials are successes is \({ }^n C_r\). The probability of \(r\) successes and \((n-r)\) failures in any one specified order is \(p^r q^{n-r}\). Thus, the probability of having exactly \(r\) successes is \({ }^n C_r p^r q^{n-r}\).

Let ‘ \(X\) ‘ be a random variable representing the number of successes. Then,

\(

P(X=r)={ }^n C_r p^r q^{n-r}(r=0,1,2, \ldots, n)

\)

Remark

- Probability of at most \(r\) successes in \(n\) trials is \(\sum_{\lambda=0}^r{ }^n C_\lambda p^\lambda q^{n-\lambda}\).

- Probability of at least \(r\) successes in \(n\) trials is \(\sum_{\lambda=r}^n{ }^n C_\lambda p^\lambda q^{n-\lambda}\).

- Probability of having first success at the \(r^{t h}\) trial is \(p q^{r-1}\).
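The formulas in this section can be collected into a few small functions. This is a minimal sketch using exact fractions; the function names are hypothetical, and the usage lines take three tosses of a fair coin as an illustrative case.

```python
from fractions import Fraction
from math import comb

def binomial_pmf(n, r, p):
    """P(X = r): exactly r successes in n independent trials with success probability p."""
    return comb(n, r) * p**r * (1 - p) ** (n - r)

def at_most(n, r, p):
    """P(X <= r): sum of the pmf from 0 to r."""
    return sum(binomial_pmf(n, k, p) for k in range(0, r + 1))

def at_least(n, r, p):
    """P(X >= r): sum of the pmf from r to n."""
    return sum(binomial_pmf(n, k, p) for k in range(r, n + 1))

def first_success_at(r, p):
    """Probability that the first success occurs on trial r: (r - 1) failures, then a success."""
    return p * (1 - p) ** (r - 1)

half = Fraction(1, 2)
print(binomial_pmf(3, 2, half))   # 3/8
print(at_least(3, 2, half))       # 1/2
print(first_success_at(3, half))  # 1/8
```

Passing `Fraction` values for `p` keeps every result exact, which makes it easy to compare against hand-computed answers.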

Example 19: A die is thrown 7 times. What is the chance that an odd number turns up (i) exactly 4 times, (ii) at least 4 times?

Solution: Probability of success is \(3 / 6=1 / 2\).

\(

\therefore \quad p=\frac{1}{2} \text { and } q=\frac{1}{2}

\)

(i) For exactly 4 successes, the required probability is

\(

{ }^7 C_4\left(\frac{1}{2}\right)^4\left(\frac{1}{2}\right)^3=\frac{35}{128}

\)

(ii) For at least 4 successes, the required probability is

\(

{ }^7 C_4\left(\frac{1}{2}\right)^4\left(\frac{1}{2}\right)^3+{ }^7 C_5\left(\frac{1}{2}\right)^5\left(\frac{1}{2}\right)^2+{ }^7 C_6\left(\frac{1}{2}\right)^6\left(\frac{1}{2}\right)^1+{ }^7 C_7\left(\frac{1}{2}\right)^7

\)

\(

\begin{aligned}

& =\frac{35}{128}+\frac{21}{128}+\frac{7}{128}+\frac{1}{128} \\

& =\frac{64}{128} \\

& =\frac{1}{2}

\end{aligned}

\)

Example 20: An experiment succeeds twice as often as it fails. Then find the probability that in the next 6 trials, there will be at least 4 successes.

Solution: Let \(p\) be its probability of success and \(q\) that of failure. Then \(p=2 q\). Also, \(p+q=1\). It gives \(p=2 / 3\) and \(q=1 / 3\).

\(P\{4 \text { successes in the } 6 \text { trials }\}\) (using \(P(X=r)=\binom{n}{r} p^r q^{n-r}\))

\(

\begin{aligned}

& ={ }^6 C_4 p^4 q^2={ }^6 C_4\left(\frac{2}{3}\right)^4\left(\frac{1}{3}\right)^2 \dots(1) \\

& P\{5 \text { successes in the } 6 \text { trials }\}={ }^6 C_5\left(\frac{2}{3}\right)^5\left(\frac{1}{3}\right) \dots(2) \\

& P\{6 \text { successes in the } 6 \text { trials }\}={ }^6 C_6\left(\frac{2}{3}\right)^6\left(\frac{1}{3}\right)^0 \dots(3)

\end{aligned}

\)

Therefore, the probability that there are at least 4 successes is

\(

\begin{aligned}

& P\{\text { either } 4 \text { or } 5 \text { or } 6 \text { successes }\} \\

& ={ }^6 C_4\left(\frac{2}{3}\right)^4\left(\frac{1}{3}\right)^2+{ }^6 C_5\left(\frac{2}{3}\right)^5\left(\frac{1}{3}\right)+{ }^6 C_6\left(\frac{2}{3}\right)^6 \\

& =\frac{496}{729}

\end{aligned}

\)

Example 21: What is the probability of guessing correctly at least 8 out of 10 answers on a true-false examination?

Solution:

\(

\begin{aligned}

P(X \geq 8) & ={ }^{10} C_8\left(\frac{1}{2}\right)^8\left(\frac{1}{2}\right)^2+{ }^{10} C_9\left(\frac{1}{2}\right)^9\left(\frac{1}{2}\right)^1+{ }^{10} C_{10}\left(\frac{1}{2}\right)^{10} \\

& =\left(\frac{1}{2}\right)^{10}\left[{ }^{10} C_2+{ }^{10} C_1+{ }^{10} C_0\right]

\end{aligned}

\)

\(

\begin{aligned}

& =\left(\frac{1}{2}\right)^{10}[45+10+1] \\

& =\frac{56}{8 \times 2^7} \\

& =\frac{7}{128}

\end{aligned}

\)

Explanation: Step 1: Define the Parameters

Total number of trials (\(n\)): 10 (the number of questions)

Probability of success (\(p\)): \(1 / 2\) or 0.5 (guessing correctly on a true-false question)

Probability of failure (\(q\)): \(1-p=1 / 2\) or 0.5

Target (\(X\)): At least 8 successes (\(X \geq 8\))

Step 2: The Binomial Formula

The probability of getting exactly \(r\) successes is:

\(

P(X=r)=\binom{n}{r} p^r q^{n-r}

\)

Since \(p=q=1 / 2\), the formula simplifies to:

\(

P(X=r)=\binom{10}{r}\left(\frac{1}{2}\right)^{10}

\)

Step 3: Calculate for \(r=8,9\), and 10

We need to sum the probabilities for 8, 9, and 10 correct answers:

\(

P(X \geq 8)=P(X=8)+P(X=9)+P(X=10)

\)

For \(r=8\) :

\(

\binom{10}{8}=\frac{10 \times 9}{2 \times 1}=45 \Longrightarrow P(8)=45 \cdot \frac{1}{2^{10}}=\frac{45}{1024}

\)

For \(r=9\) :

\(

\binom{10}{9}=10 \Longrightarrow P(9)=10 \cdot \frac{1}{2^{10}}=\frac{10}{1024}

\)

For \(r=10\) :

\(

\binom{10}{10}=1 \Longrightarrow P(10)=1 \cdot \frac{1}{2^{10}}=\frac{1}{1024}

\)

Step 4: Final Sum

\(

P(X \geq 8)=\frac{45+10+1}{1024}=\frac{56}{1024}

\)

Reducing the fraction (dividing by 8):

\(

P(X \geq 8)=\frac{7}{128}

\)
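Since \(p=q=1/2\), all \(2^{10}=1024\) right/wrong patterns are equally likely, so the result can be cross-checked by simply counting the patterns with at least 8 correct answers. This is a brute-force sketch with illustrative variable names.

```python
from fractions import Fraction
from itertools import product

# Each pattern records, per question, 1 for a correct guess and 0 for a wrong one.
patterns = list(product([0, 1], repeat=10))

# Count the patterns with at least 8 correct guesses.
favourable = sum(1 for pattern in patterns if sum(pattern) >= 8)

print(favourable, len(patterns))             # 56 1024
print(Fraction(favourable, len(patterns)))   # 7/128
```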